NN Model

What is an NN Model?

A neural network model or neural network is a technique used to approximate an unknown function using historical data or observations from a domain.

Neural networks are most commonly used as supervised machine learning algorithms, and are loosely inspired by the way a human brain works. They consist of a set of connected input/output nodes, where each connection has an associated weight. Each node multiplies its inputs by these weights and sums the results. An activation function then determines the magnitude of the node’s output and normalizes it, typically to the range -1 to 1 or 0 to 1, depending on the function chosen.
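The weighted sum and activation described above can be sketched for a single node as follows. This is a minimal illustration, not a reference implementation: the function name `neuron_output`, the choice of `tanh` as the activation, the optional `bias` term, and the sample numbers are all assumptions made for the example.

```python
import math

def neuron_output(inputs, weights, bias=0.0):
    """Weighted sum of the inputs passed through a tanh activation."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    # Each input is scaled by the weight of its connection, then summed.
    # The bias term is an assumption of this sketch; it defaults to zero.
    weighted_sum = sum(x * w for x, w in zip(inputs, weights)) + bias
    # tanh squashes the sum into the open interval (-1, 1).
    return math.tanh(weighted_sum)

# Hypothetical values chosen only for illustration.
output = neuron_output([0.5, -1.0, 2.0], [0.8, 0.2, -0.5])
print(output)
```

Here the weighted sum is 0.4 - 0.2 - 1.0 = -0.8, so the node outputs tanh(-0.8), a value between -1 and 0.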

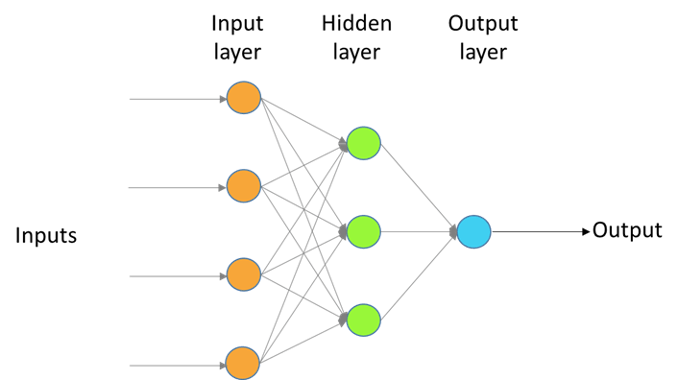

Figure 1: representation of a neural network with one hidden layer.

A neural network is organized in layers, each containing several nodes. The first layer is called the “input layer”, and the last the “output layer”. The layers in between are called “hidden layers”.
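As a rough sketch of how data flows through these layers, the following passes an input through one hidden layer and then an output layer. The helper names (`layer_forward`, `forward`), the `tanh` hidden activation, the linear output, the bias terms, and every weight value are hypothetical choices for illustration only.

```python
import math

def layer_forward(inputs, weights, biases, activation):
    """Compute one layer: each row of `weights` feeds one node."""
    return [
        activation(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weights, biases)
    ]

def identity(z):
    """Linear (pass-through) activation used for the output layer."""
    return z

def forward(inputs, hidden_w, hidden_b, output_w, output_b):
    """Input layer -> one tanh hidden layer -> linear output layer."""
    hidden = layer_forward(inputs, hidden_w, hidden_b, math.tanh)
    return layer_forward(hidden, output_w, output_b, identity)

# Hypothetical network: 2 inputs, 3 hidden nodes, 1 output.
hidden_w = [[0.5, -0.3], [0.1, 0.8], [-0.7, 0.2]]
hidden_b = [0.0, 0.1, -0.1]
output_w = [[1.0, -1.0, 0.5]]
output_b = [0.2]

prediction = forward([1.0, 2.0], hidden_w, hidden_b, output_w, output_b)
print(prediction)
```

Adding more hidden layers would amount to chaining further `layer_forward` calls between the input and the output.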

The use of neural networks as function approximators is justified mathematically by the Universal Approximation Theorem. This theorem states that a feedforward network with a linear output layer and at least one hidden layer with a “squashing” activation function can approximate any continuous function on a closed, bounded domain to within any specified error, provided that the hidden layer has enough nodes. It was first proved by George Cybenko in 1989 for sigmoidal activation functions.
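For readers who prefer a formula, Cybenko's result can be paraphrased as follows. The symbols $N$, $\alpha_j$, $w_j$, and $b_j$ are notation chosen here and may differ from the paper's. Let $\sigma$ be a continuous sigmoidal function and $f$ a continuous function on the unit cube $[0,1]^n$. Then for every $\varepsilon > 0$ there exist a number of hidden nodes $N$, output weights $\alpha_j \in \mathbb{R}$, hidden weights $w_j \in \mathbb{R}^n$, and biases $b_j \in \mathbb{R}$ such that

$$
G(x) = \sum_{j=1}^{N} \alpha_j \, \sigma\!\left(w_j^{\top} x + b_j\right)
\quad \text{satisfies} \quad
\lvert G(x) - f(x) \rvert < \varepsilon \quad \text{for all } x \in [0,1]^n .
$$

The theorem guarantees that such a network exists, but it does not say how large $N$ must be or how to find the weights.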

There are many types of neural network models, such as feedforward neural networks, recurrent neural networks, and convolutional neural networks. Neural networks with several hidden layers are the subject of a field known as deep learning.

Why are NN Models Important?

NN models have gained great importance in recent years with the advent of big data. The large amounts of data that are continuously being accumulated can be put to use through these models. Their applicability is very wide, spanning science, engineering, health, and finance. The list keeps growing as more and more data is obtained from different sources.

Advances in robotics and IoT have provided new ground for neural network models, where they are used in computer vision, image analysis, natural language processing, and more.

NN Models + LogicPlum

NN models are at the heart of LogicPlum’s platform. Together with automation, they form the foundations of its modeling capacity. Automation allows users to concentrate on their fields of expertise, knowing that they are obtaining the best possible model.

Guide to further reading

For those wanting to read George Cybenko’s original paper:

Cybenko, G. Approximation by Superpositions of a Sigmoidal Function. Math. Control Signals Systems (1989) 2:303-314. Available at http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.441.7873&rep=rep1&type=pdf

For those who are interested in exploring applied neural network modeling:

| Authors | NN Model Topics |
|---|---|
| | Predicting Neural Network Dynamics via Graphical Analysis |
| Russell W. Anderson | Biased Random-Walk Learning: A Neurobiological Correlate to Trial-and-Error |
| Tuul Triyason | VoIP Quality Prediction Model by Bio-Inspired Methods |
| Gilbert Lim | Technical and clinical challenges of A.I. in retinal image analysis |
| Knut Kvaal and Jean A. McEwan | Multivariate analysis of data in sensory science |
| Koyel Chakraborty | Sentiment Analysis on a Set of Movie Reviews Using Deep Learning Techniques |